Flux

Flux:: sound and picture development was founded in the 1990s during the early days of digital audio software workstations, collaborating with Merging Technologies in the creation of Merging’s now renowned products.

18 Products by Flux:

Alchemist

Alchemist is the ultimate dynamic processing tool providing full control throughout the many steps of processing. Its wide range of uses stretches from : • simple...

BitterSweet Pro

BitterSweet Pro is the result of the improved algorithms found in Flux’s acclaimed transient processor freeware (BitterSweet), that has been used by hundreds of...

Elixir

Elixir is built upon the same algorithm as the legacy Elixir v3. It was meticulously designed to achieve a natural sounding result preserving the natural timbre of the...

Epure

Epure is a state-of-the-art five-band equalizer designed to provide the absolute finest audio quality within the domain of digital audio processing. It was carefully...

Immersive Essentials

The Immersive:: Essentials plugin bundle provides three powerful processing tools for immersive mixing and content production. They now include support for Dolby...

Ircam HEar

HEar provides faithful reproduction of a stereo or surround mix with a pair of conventional stereo headphones. It relies on proven technology to model the various...

Ircam Verb

An algorithmic room acoustics and reverberation processor built in a modular way, with a recursive filtering based reverberation engine that reproduces and synthesizes...

MiRA Live

Live mix with confidence in a reactive, real-time environment with MiRA Live, part of the MiRA analyzer family of audio analysis and metering applications. MiRA Live...

MiRA Session

Get your mix RIGHT with the MiRA Session spectrum and metering system, part of the MiRA analyzer family of audio analysis and metering applications. Whether you are...

MiRA Studio

With MiRA Studio, mix with confidence and be in full control in a reactive, real-time environment. MiRA Studio is the first immersive audio analyzer with support for...

Pure Compressor

The Pure Compressor Plug-in offers everything you would expect from a compressor, ranging from clean transparent subtle processing to classic heavy pumping. In...

Pure DCompressor

Pure DCompressor provides the tools for restoring the original dynamics of an audio track. The dynamics processing algorithms are designed to automatically increase...

Pure DExpander

Pure DExpander is a carefully designed plug-in to de-expand audio material by magnifying the spatial details and enhancing the low level information. This results in...

Pure Expander

Pure Expander is a powerful versatile processor capable of anything from mild expansion to hard noise gating, perfectly suited for soft downward expansion. It can...

Pure Limiter

Pure Limiter makes transparent limiting easy. A dramatic increase of the average audio level can now be accomplished without damaging the perceived audio quality. The...

Solera

The Solera dynamic processor combines the power of a compressor, expander, de-compressor and a de-expander. All four are available directly through the user interface...

SPAT Revolution Essential

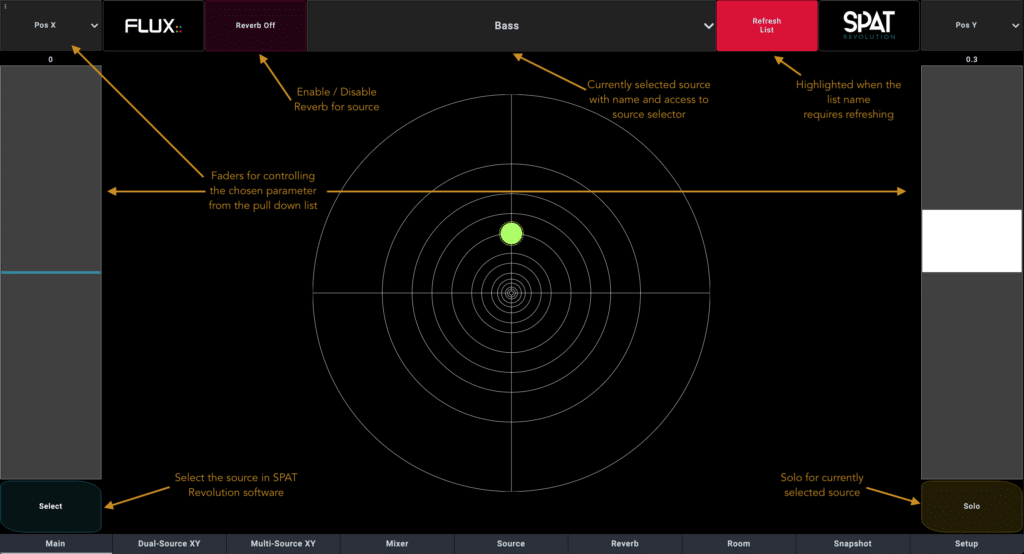

From Creation to Real-time Immersive Delivery: giving artists, sound designers and sound engineers virtually unlimited possibilities to design, create and mix an...

Syrah

Syrah, based on the legendary algorithms behind the Alchemist and Solera processors, has been a prized possession for many engineers. Designed to react in real-time...

5 Bundles by Flux:

EVO Series Pack Bundle

The essential element of all analog consoles is the Channel Strip and the philosophy behind it is to be efficient and fast, and to make things sound great...

Ircam Trax Bundle

A suite of three processors developed over the years by the sound analysis/synthesis team at IRCAM. Their goal was to provide users with signal transformation...

MiRA Ultimate Bundle

MiRA Ultimate from FLUX:: is the pinnacle of the MiRA audio analysis suite of software because it packages all three MiRA applications - MiRA Live, MiRA Studio, and...

Studio Session Pack Bundle

Every plug-in contained in the "Studio Session Pack" uses exactly the same engine as their respective "professional" versions but they have been limited to fit ONLY...

Ultimate Plugin Pack Bundle

The FLUX:: Ultimate plugin pack, which replaces the Full Pack 2.2, includes all FLUX:: Series plugins, EVO:: series (5 plug-ins), Ircam Verb, Ircam Verb session and...

Latest News from Flux:

FLUX:: UP YOUR STUDIO NOW!

Check out this year’s spring promotion offers on Studio Session Pack and the EVO:: Series Pack!

MiRA 25.04 Service Release – Available Now

The FLUX:: team is excited to announce MiRA 25.04, the first update since the product introduction in January this year. This new release is packed with enhancements designed to elevate your user experience and to refine and improve existing features.

The MiRA 25.04 release is a free update for all users with a valid MiRA perpetual license or active MiRA subscription plan and is available now in FLUX:: Center.

IO Setup

We’ve added an in-app help feature to the IO setup page. This helpful toolbar will guide you through configuring input and output settings for three typical use cases: studio, live show, and system tuning. Additionally, when modifying the input reference number of channels, an automatic offset for additional channels is now applied.

Live and Capture

We’ve made numerous optimizations to enhance your experience:

- Drag and drop captures between sessions

- Improved transfer function with octave phase smoothing

- Frequency-dependent thresholding and auto-pause display

- Enhanced robustness of the analysis

SampleGrabber

The robustness of the MiRA SampleGrabber has been significantly improved, particularly in the following cases:

- Handling loops in DAWs

- Reconnection stability

- LUFS measurement reliability

- IO setup configuration

Complete Release Notes

In addition to these key updates, we’ve focused on improving the overall stability and consistency of the software.

For detailed information, please refer to the full release notes here:

https://shop.flux.audio/en_US/page/mira-release-notes

FLUX:: Expands Audio Analysis Capabilities With The New MiRA Family Of Analyzer Software

Empowering Audio Professionals with Real-Time Precision, Immersive Visualization, and Customizable Tools for Live, Studio, and Calibration Applications

NORTHRIDGE, Calif. — HARMAN Professional Solutions, the global leader in audio, video, control, and lighting systems announced the launch of the MiRA software family designed for diverse audio analysis and measurement applications. Whether it’s mixing and mastering, system calibration, or loudness metering, MiRA combines precision, versatility, and innovation to meet the needs of today’s audio professionals.

With support for multi-channel immersive audio deployments, MiRA redefines what’s possible in real-time audio analysis. Its focus on superior visual response enhances audio capture, delivering unparalleled processing for exceptional visualization.

A Stand-Alone Solution for All Audio Applications

The MiRA family is built on the legacy of the acclaimed FLUX:: Analyzer software, a trusted name in music production, post-production, and audio mastering for over a decade. Incorporating FLUX:: proprietary Sample Push technology, MiRA extends integration capabilities by enabling seamless hardware connections through ASIO and Core Audio. Samples are broadcast to the standalone MiRA application via local or standard IP networks, simplifying routing challenges in multi-channel immersive audio setups.

“The MiRA software family represents a whole new standard in audio engineering and we’re proud to deliver to our customers what we believe is the brightest star in the audio analysis universe,” said Gaël Martinet, Director of Software Development, HARMAN Professional Solutions. “The software engineering of MiRA was built on the foundation of our legacy analyzer and is designed to serve the needs of audio professionals well into the future as their go-to tool.”

Product highlights for MiRA include:

Customizable and Intuitive Interface: Fully customizable workspaces allow users to tailor layouts and settings to their specific preferences, simplifying workflows for professionals in live and studio environments.

Sample Push Technology: Proprietary technology seamlessly integrates with DAWs, mixing consoles, and immersive processors, enabling instant, precise analysis.

Advanced Real-Time Features: Includes tools for Transfer Function readings, Magnitude, Phase, Coherence traces, live impulse response, and delay computation.

Nebula Spatial Spectrogram: A groundbreaking visualizer that combines spectrum analysis with vector scope technology, enhancing localization and immersive audio monitoring.

Adaptive Resolution Transform (ART): Provides accurate, readable, and responsive transfer function measurements.

Flexible Multi-Microphone Capture: Supports up to 23 microphones with features like pairing mode for enhanced coherence between floor and ear levels, as well as real-time averaging and delay finding.

The MiRA analyzer family comes in three tailored variants to cover every need:

MiRA Live: Ideal for real-time live mixing with instant visual control, SPL/Leq metering, and pre-defined show layouts.

MiRA Studio: Designed for mastering and post-production applications with precision tools for detailed analysis.

MiRA Session: Ideal for music and audio content production in stereo with a range of analysis tools including loudness metering.

The MiRA Ultimate bundle is also available, combining all three variants into a single comprehensive package.

As with all FLUX:: products, loyalty upgrade paths are available for users of previous Analyzer products. For more information on the MiRA family, visit https://www.flux.audio/mira-analyzer.

ADDITIONAL DETAILS

For complete product details and specifications, please visit:

ABOUT HARMAN PROFESSIONAL SOLUTIONS

HARMAN Professional Solutions engineers and manufactures audio, video, lighting and control (AVLC) products for entertainment and enterprise markets, including live performance, audio production, large venue, cinema, retail, corporate, education, government, hospitality, broadcast and more. With leading brands including JBL Professional®, AKG®, Martin®, AMX®, Soundcraft®, BSS Audio®, Crown®, dbx Professional®, and Lexicon Pro®, HARMAN Professional delivers powerful, innovative and reliable solutions that are designed for world-class performance. HARMAN Professional Solutions is a Strategic Business Unit of HARMAN International, a wholly-owned subsidiary of Samsung Electronics Co., Ltd. For more information, visit http://pro.harman.com/.

GLOBAL

David Glaubke

Director, Global Corporate Communications

HARMAN Professional Solutions

+1 (818) 470-7322

david.glaubke@harman.com

SPAT Revolution 25.01 With ADM-OSC V1.0 Compatibility, Relative OSC And MiRA Support

The SPAT Revolution 25.01 release contains important new features, including ADM-OSC v1.0 compatibility, relative OSC, and support for FLUX:: MiRA, as well as maintenance and improvement updates. It is a free update available now in FLUX:: Center for all users with a SPAT Revolution perpetual license or an active subscription plan.

ADM-OSC V1.0 compatibility

Following the recent introduction of ADM-OSC v1.0 at AES New York, SPAT Revolution is fully compliant with this new standard as of build 24.12.50436.

OSC relative message

This new feature makes it possible to act on a parameter without knowing its current value. The syntax is unchanged apart from a keyword added to the OSC pattern to make the message relative.
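As a rough illustration of the difference between the two patterns, the sketch below encodes an absolute and a relative gain message as raw OSC 1.0 packets using only the Python standard library. The address `/source/1/gain` and the placement of the `relative` keyword before the parameter name come from the example in the release notes; the helper names (`osc_float_message`, `make_relative`, `send_udp`) are hypothetical, and the host and port must be taken from your own SPAT Revolution OSC input settings.

```python
import socket
import struct

def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_float_message(address: str, value: float) -> bytes:
    """Encode an OSC message carrying a single big-endian float32 argument."""
    return (_osc_pad(address.encode("ascii"))
            + _osc_pad(b",f")
            + struct.pack(">f", value))

def make_relative(address: str) -> str:
    """Insert 'relative' before the last path segment.

    Assumption: the keyword position is inferred from the single example
    in the release notes and may differ for other parameters.
    """
    head, _, param = address.rpartition("/")
    return f"{head}/relative/{param}"

def send_udp(packet: bytes, host: str, port: int) -> None:
    """Send one OSC packet over UDP; host and port are user-supplied."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))

# Absolute: sets the gain of source 1 to -10.
absolute = osc_float_message("/source/1/gain", -10.0)
# Relative: lowers the gain of source 1 by 10 from wherever it currently is.
relative = osc_float_message(make_relative("/source/1/gain"), -10.0)
```

The packets are only built here, not sent; calling `send_udp(relative, host, port)` with the values from your own setup would transmit one.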

MiRA integration

Following the release of MiRA, our new analyzer software, the send from SPAT Revolution to MiRA has been improved and updated.

Full release notes:

New Features

Add OSC relative support. Example: “/source/1/gain -10.0” will set the gain to -10, while “/source/1/relative/gain -10.0” will decrease the current gain by 10.

Add full compatibility with ADM-OSC v1.0, including modified ADM-OSC presets with new port numbers, reviewed OSC transformations with some new parameters, and a new way to edit the maximum distance of the ADM-OSC normalized value.

MiRA integration

Improvements

Improve support of high-channel-count soundcards

Reduce CPU consumption of inputs and outputs

Support of ASIOSTInt32LSB24

Mac Intel – Performance improvements

Bug fixes

App - Core Audio headphones and loudspeakers

Core - Fix crash when deleting a snapshot

Core – If the number of output channels of the device is below the number of channels of the output blocks, some channels are not output

Item page - Changing delay in meters or feet does not work

New preferences path is not added to the default Python path list

OSC – Message /global/project/dump is not working

OSC – -1 does not consider the room distance scaling

rttrPM - Position is sluggish while receiving rttrPM

Setup page - Can’t distinguish the difference between both the ‘record audio’ buttons in a transcoder

Known Issues

Most Important

– App – Bad GUI initialization on Windows with intel graphics chipsets

– PI – AAX - Renaming the send track in Pro Tools cuts the audio if verb switches are not the default one.

– S6L integration - SPAT Send track name is not retained properly and sent every time the UI opens (erasing your SPAT Source name).

– SPAT Send - Renaming an instance cuts the audio in Pro Tools / Windows 10. The LAP has to be re-enabled to fix it

Important

– App – A process still running after quitting SPAT Revolution when ASIO4ALL is used as audio driver on Windows

– Automation parameter issue when entering a value manually in Nuendo

– Display issues when OS display scaling is under the default resolution

– On reverb preset density change, sound is cut

– SPAT Plugins – Crash on reloading mixer with Pyramix versions earlier than 14 & Ovation versions earlier than 10

– SPAT Plugins – Tail reverb is not reported to the plugins in LAP when IO devices are “None” in SPAT Revolution

– SPAT plugins – The second OSC output status is not retained in AAX / AAX VENUE after a reboot or an AAX host rack reset

Flyover In Chicago Takes Off With Help From FLUX:: SPAT Revolution And JBL Professional

HARMAN Professional Solutions’ immersive audio software, loudspeaker, amplification, and signal processing brands contribute to bring sensory immersion experience to life.

CHICAGO – At its Spring 2024 opening, Flyover in Chicago became the newest of Flyover Attractions’ immersive indoor flying rides, joining existing installations in Las Vegas; Vancouver, Canada; and Reykjavik, Iceland, that transport guests to the planet’s most epic places through exhilarating flying journeys. Located within Chicago’s landmark waterfront Navy Pier, the multi-sensory experience, which incorporates leading drone technologies along with impressive aerial shots and first-person narratives, showcases the city from perspectives never seen before.

Flyover’s signature Chicago journey will be shown on an impressive 65-foot spherical screen with flight motion seats engineered to swoop, dip and turn, giving guests the feeling of flight. The attraction will transport 61 guests at a time, with complete sensory immersion using wind, mist and scents, as fliers hang suspended.

The breathtaking ride employs a massive number of audio sources, which are controlled and dynamically spatialized by the advanced audio object and Wave Field Synthesis features of FLUX:: SPAT Revolution immersive audio software, then played through a highly customized system of JBL loudspeakers in a nearly spherical configuration.

The FLUX:: software and JBL Professional loudspeakers are supplemented with Crown amplifiers, BSS loudspeaker processing, and control systems from HARMAN Professional, who also supplied extensive support and expertise to the project. Designing and building an attraction like Flyover is a highly complex undertaking, so the thrilling sonic world of Flyover Chicago is the work of a team of experts from several companies working closely together.

Immersive Entertainment

An extensive presentation occurs primarily in its own immersive theatre, which features a 10-foot diameter, circular “lollipop” screen in the center (serving guests on both sides), plus an elliptical screen wrapping 360 degrees around the audience. Sound designer/mixer Tim Archer of Masters Digital programmed SPAT Revolution to control the soundtrack, which plays through a system designed by Wels, Austria-based Kraftwerk Living Technologies (KLT), who also designed the main flight ride audio system.

Speakers are placed behind the perforated screens, with each side of the venue featuring the same speaker configuration: eight JBL COL800 column loudspeakers inside the lollipop facing out (for a total of 16 cabinets), and six JBL AC18/26 compact two-way loudspeakers behind the wraparound screen (for a total of 12). Low-frequency support is provided by four ceiling-mounted JBL ASB6115 subwoofers.

Crucially, these are not arrays in which every loudspeaker receives the same signal; SPAT Revolution sends a discrete signal to each loudspeaker in order to implement Wave Field Synthesis and other reproduction techniques. Still, a source such as dialogue may need a large number of speakers to contribute for coverage or perceived sound size reasons, a situation which can create its own problems.

“When you’ve got eight speakers that are not in a simple array and all are receiving the same content, how do you get away with not having comb filtering?” asks Archer. “The answer is that you run the signal through SPAT. I pop one track of dialogue into SPAT and WFS spreads it across the eight columns. And you can be almost around the side of the lollipop before you lose the character of the sound; it’s extremely wide. WFS is killer. It’s a game changer.”
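To give a feel for why each loudspeaker receives its own signal, here is a deliberately simplified sketch of the idea behind Wave Field Synthesis: each loudspeaker replays the source with a delay and level that depend on its distance from a virtual source position. This is not SPAT Revolution's algorithm; real WFS driving functions also involve filtering and windowing. The function name, the distance clamp, the gain law and the speaker layout (eight columns 0.5 m apart, source 2 m behind them) are all assumptions made for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate speed of sound in air near 20 °C
MIN_DISTANCE = 0.1      # m, hypothetical clamp that avoids division by zero

def driving_parameters(source, speakers):
    """Return one (delay_seconds, gain) pair per loudspeaker.

    Delays follow the travel time from the virtual source to each
    loudspeaker, relative to the nearest one; gains fall off with the
    square root of distance. Both are simplifications.
    """
    distances = [max(math.dist(source, spk), MIN_DISTANCE) for spk in speakers]
    nearest = min(distances)
    return [((d - nearest) / SPEED_OF_SOUND, math.sqrt(nearest / d))
            for d in distances]

# Hypothetical layout: eight columns on a line, 0.5 m apart, with the
# virtual source 2 m behind the centre of the line.
speakers = [(-1.75 + 0.5 * i, 0.0) for i in range(8)]
source = (0.0, -2.0)
params = driving_parameters(source, speakers)

for (x, _), (delay, gain) in zip(speakers, params):
    print(f"x={x:+.2f} m  delay={delay * 1000:.2f} ms  gain={gain:.3f}")
```

Because every loudspeaker gets a different delay and gain, the eight outputs are no longer copies of the same signal, which is the property the quote above credits with avoiding comb filtering.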

A ring of 10 more AC18/26s mounted above the audience (five on each side of the room) acts as the primary source for presenting the music from composer Elliott Wheeler.

The Main Flight Ride

For the flight ride, guests are seated on one of three separate moving platforms which are vertically “stacked” in the room. Presenting realistic, well-balanced sound to every guest was the biggest challenge facing both Archer and KLT’s Philipp Hartl, responsible for Sales | Lead Audio System Design & Optimization.

“There’s three platforms, and that’s critical. We’re talking about people above, in the middle, and below,” reveals Archer. “That is probably the main thing that makes this mix so unique: traditionally, we are all based in the idea that the person mixing is on the same plane as the rest of the audience. You normally don’t have people located on three different planes.”

“But at Flyover Chicago you do, so the guests are having three different experiences. Since everybody’s not got the same visual perspective, they should not get the same audio perspective.” Complicating things further was the sheer number of moving elements, which defined a massive mix process. “I am sitting down with just over a thousand tracks, about 600 for music and about four hundred and change for sound effects,” Archer reports.

With the platforms in constant motion, object audio was the only way to place a source and have each person hear it properly. Two guests viewing the same visual element from different platforms need separate mixes that accurately localize the sound for their positions…while the platforms continue to move. Traditional multichannel mixes simply cannot accomplish this. For this reason, localization in SPAT Revolution is not viewed in terms of routing sounds into loudspeakers, but, rather, sending them to “pan points” or virtual speakers to synthesize artificial wavefronts.

What’s more, some musical events relate to visual elements, necessitating moving music sources through space, too. “I’ve got a trumpet player on top of the Wrigley Building, and when the camera flies by him, his trumpet sound has got to whip past us,” Archer details. “Every single thing in the mix moves.” To facilitate this, composer Wheeler and his engineer went to the trouble to deliver the music to Archer already in the form of a SPAT Revolution session.

In his sound system design, Hartl faced the same issue of trying to supply sound appropriately to three continuously moving platforms. “As a practical matter, it’s not possible to achieve the exact same coverage at every seat on each platform,” Hartl explains, “so one of the really tricky things was to find a middle way to tune the speakers that minimized the amount of compromise made at any individual seat.”

Hartl used 34 JBL AM7215-series high power two-way loudspeakers, deployed in four different vertical layers, plus four JBL ASB7128 dual 18-inch subwoofers to furnish prodigious amounts of low-frequency effects. As in the pre-show theatre, SPAT Revolution rendered a different signal feed for each loudspeaker. To ensure clear rear localization, a pair of JBL Control 23-1 ultra compact speakers is mounted on each seat back. Once the content was generated and the system tuned, the presentation was implemented in a show control system for playback by Miami-based Smart Monkeys.

Teamwork is Key

Bringing such an immensely complex project to a successful conclusion involves plenty of tweaking and problem-solving. As a central contributor to the project, HARMAN gave close support to all the teams involved, with Flux’s Hugo Larin being particularly deeply involved in the design and programming of the immersive audio. “Hugo’s involvement was what got the show across the line,” compliments Eric Sambell, Flyover’s Global Director of Construction and Entertainment. “He was knowledgeable and really jumped in, even with issues that weren’t directly involved with SPAT, like some aspects of design and workflow. It was a really great value-add.”

Teams from Flyover and all of the vendors reported tremendous cooperation and support from all parties, which made a difficult, stressful project actually fun. “It was great to see the different companies work together and not against each other, which was the case at Flyover,” enthuses Hartl. “We learned from each other, and that was the best thing which could have happened there.” Archer was just as impressed. “The spirit of collaboration started right with the management of Flyover, who assembled teams of people that could and would work together.”

In the end, of course, the effort is not about the sophisticated systems or even the teams, but providing the guests an amazing experience, as Sambell highlights. “The technology has to be so good that it disappears, like it isn’t even there, so that guests enjoy a seamless experience from the moment they walk in the door to when they walk out afterwards,” he asserts. “We don’t want guests thinking about the technology or where they are, we just want them to be present in what’s happening.”

From Construction to Immersion

The magic of Flyover is made possible through planning, collaboration and massive amounts of hard work. In this episode, Flyover’s construction and integration team explore how an old IMAX theater on Navy Pier became a one-of-a-kind immersive experience.

Connect with your curiosity, amplify your senses and feel inspired to explore. Our flight journeys transport you over iconic global destinations, so you can experience the world from a whole new perspective. Sit suspended in front of a massive spherical screen and feel the unmistakable thrill of flight, while mists, winds and scene-setting scents deepen your immersion.

Harman Pro Case Studies – Flyover in Chicago, USA

SPAT Revolution 24.08 – Now With Support For JBL Venue Synthesis

This SPAT Revolution 24.08 release is a feature update release providing support for the JBL Venue Synthesis 3D acoustic simulation software models, and is a free update for all users with a SPAT Revolution perpetual license (from v22.09) or an active subscription plan.

JBL Venue Synthesis Support

Venue Synthesis .vsyn files (from v1.1) can now be imported directly into SPAT Revolution’s speaker editor on version 24.08 and above, and includes speaker arrangement optimization (layering tolerance, elevation correction) for the various loudspeaker elevations.

A SPAT Revolution Reference tool has been added to the Venue mode tool bar (Venue > Add > SPAT Revolution Reference). This new tool adds a user-adjustable reference position known as the SPAT Revolution reference to position the loudspeakers.

This new feature accelerates system design and simplifies the creation of immersive systems using Venue Synthesis and SPAT Revolution.

JBL Venue Synthesis

Venue Synthesis is JBL’s next-generation 3D acoustic simulation software, designed from the ground up to allow system designers and engineers to accurately predict the acoustical and mechanical performance of JBL sound systems.

https://jblpro.com/en/products/venue-synthesis

SPAT Revolution 24.06 Service Release With New Tools: SPAT Remote And Chataigne Module

This SPAT Revolution 24.06 release is a service release with maintenance and improvement updates, and is a free update for all users with a SPAT Revolution perpetual license or an active subscription plan.

Release Notes

Bug fixes

- Fixed bug where SPAT could freeze when recalling snapshots

- Fixed reentrancy issue when recording automation in LAP

- VST3 automation improvements

- Fixed AAX instability problems

- Fixed bug where the position XYZ is not written in the SPAT Send when receiving OSC.

- More than 30 other bug fixes, including Ambisonics, Groups, OSC, and various UI fixes

Improvements

- Core – Refresh audio device when displaying the audio device list

- Core – Refresh network list when displaying the IP address list

- If a crash occurs when loading a session during the opening of SPAT Revolution, propose to open SPAT without loading the session

- OSC – Add OSC message to arm/unarm objects, to begin and stop the record, and for page navigation.

- 3D View – Add option to display only virtual or real speaker

Important: The SPAT Revolution preferences have been moved to a new folder

New Control Tools

Updated SPAT Remote 24.06

(requires SPAT Revolution 24.06 binary and latest OpenStageControl version 1.26.1)

- New selection by source name, with drop down menus

- New UI and organization for most of the panels

- Entirely new fresh design for the snapshot panel

- Color of the XY pads and the mixer now follows the color of the SPAT Revolution

- New grid layout for XY pads, ratios are maintained to 1:1 (square)

- Support for small screen devices

Chataigne Module – Open-source Community Created Tool

- Free and open-source software which aims to create a common tool for artists, technicians and developers who wish to use technology and synchronize software for shows, interactive installations or prototyping.

- Integrates SPAT Revolution natively with Chataigne through the Community modules

- Allows control of Sources, Rooms and Snapshots

This module is available in the Community Modules Manager inside the Chataigne Software

https://benjamin.kuperberg.fr/chataigne/en

Join FLUX:: At InfoComm 2024 In Las Vegas For An Immersive Experience With SPAT Revolution

We welcome you to visit us at the Las Vegas Convention Center in the Harman booth #C8622, and to join us for an Immersive experience with SPAT Revolution in Demo Room #N109 in North Hall.

Revolutionize Audio Mixing with SPAT Revolution

Discover the power of Immersive audio production with SPAT Revolution during our live demonstration. Listen to next-generation mixes, a way to transport and engage the audience with Immersive audio. Discover how Harman is engaged in immersive audio with SPAT Revolution, and preview the latest developments of the SPAT Revolution Immersive Audio Engine.

Elevating Audio Production from Creation to Delivery

SPAT Revolution is a state-of-the-art real-time immersive audio mix engine software, redefining the art of object-oriented and perceptual mixing, providing the means for intuitively positioning audio-sources in space, letting the acoustic signature of the room build the desired depth, independently from the output format.

Designed to integrate into virtually any workflow, and with full automation of the spatialization parameters, SPAT Revolution is at the forefront of audio production technology, offering unparalleled digital audio workstation enhancements. It excels in both live and studio environments by transcending traditional stereo and surround limitations, pioneering a novel audio mixing paradigm.

Register Now

Use VIP code HAR276 for a FREE Pass

https://www.infocommshow.org/

Harman International

Booth #C8622

Demo Room #N109

InfoComm 2024 – June 11th to 14th

Las Vegas Convention Center

Voyages Indigenous Tourism Australia Tells Mala Ancestral Story Through State-Of-The-Art Flux:: SPAT Revolution Technology

May 2024, Australia

In order to bring to life a chapter of the Mala ancestral story, Madison AV worked closely with Auditoria Systems and the Aṉangu people to elevate storytelling to a level never seen before through the deployment of drone technology, lasers, projection lighting and a HARMAN immersive audio system for the Wintjiri Wiṟu Experience in Uluru, Australia.

“HARMAN delivers immersive audio solution for the world’s first multimedia storytelling experience on the Indigenous Aṉangu people; an immersive experience which includes drones, laser projection and an immersive audio solution pairing JBL loudspeakers with the SPAT Revolution spatial technology”

Jeff Shoesmith from Madison AV mentioned that HARMAN products were chosen due to their proven track record of installation in very demanding weather conditions. JBL loudspeakers were also found to provide consistent frequency dispersion for accurate spatial imaging. Separately, SPAT Revolution was selected for its very powerful spatial engine with almost limitless options; it adapted very well to the layout of the system, providing accurate spatial imaging along with great sounding spatial reverberation.

“The project’s audio system was designed to complement the immense visual canvas that the night time sky provided for the drones with the score and narration played back over an immersive system surrounding the audience,” shared Luis Miranda, Audio Visual Consultant from Auditoria. “A six-channel frontal loudspeaker system using the JBL AWC series and an additional six channels of JBL Control 25AV rear loudspeakers were used to allow for accurate placement of sound images. The show was mixed on site using the SPAT Revolution spatial audio engine, which provided the necessary reverberation due to the system being installed outdoors with no natural reverberation available.”

One of the biggest challenges for this project was installing the system in the middle of the Australian desert, in a very remote location with limited weather shelter, temperature control or access to facilities. The system also had to be robust enough to be left outdoors in extreme weather conditions for years with little maintenance.

A spokesperson for Voyages Indigenous Tourism Australia mentioned how the SPAT Revolution spatial audio technology transcends the limitations of traditional stereo and surround sound formats, intensifying the storytelling and captivating the audience.

“HARMAN is extremely honored to be able to play a part in the world’s first-of-its-kind cultural and multimedia sensorial experience,” said Amar Subash, VP & GM, HARMAN Professional Solutions, APAC. “As one of the first large scale immersive installations of JBL and Flux::, we have only scratched the surface in terms of the many incredible applications of immersive audio in leisure attractions.”

https://pro.harman.com/news/voyages-indigenous-tourism-australia-tells-mala-ancestral-story-through-state-of-the-art-flux-spat-revolution-technology

Experience SPAT Revolution With FLUX:: At The ISE 2024 In Barcelona

Come and join the FLUX:: team at the ISE 2024 exhibition in Barcelona. This will be the first official outing of FLUX:: with Harman International, at Hall 3 – 3F300, where the team will demonstrate the SPAT Revolution immersive software engine.

The demonstration will include many of the newly introduced features such as media recording, object group function, EASE (AFMG) XLD import with orientation, a range of new 3D view options and speaker arrangement categories for Apple and MPEG-H.

Discover the power of SPAT Revolution for immersive audio production, and how it integrates with major host environments like Avid Pro Tools, Nuendo, and QLab, providing comprehensive immersive audio mixing for live and prepared content with increased spatial resolution.

Register Now and get your ticket for Free

Harman International, Hall 3 – 3F300

About SPAT Revolution

SPAT Revolution is a state-of-the-art real-time immersive audio mix engine that redefines the art of object-oriented and perceptual mixing. It makes it possible to intuitively position audio sources in space, letting the acoustic signature of the room build the desired depth, independently of the output format. Designed to integrate into virtually any workflow, and with full automation of the spatialization parameters, SPAT Revolution gives artists, sound designers, and sound engineers virtually unlimited possibilities to design, create and mix an outstanding immersive experience.

Latest 50 Videos from Flux :

'FLUX:: Immersive: The New Era of Spatial Audio' Event & Demonstration in Thailand Sep 19, 2025

FLUX:: & JBL Professional Audio Demo Room Captured at Integrated Systems Europe | Highlights Feb 24, 2025

MiRA Studio - Multi-Channel Analysis | Audio Analysis & Metering Software from FLUX:: Jan 28, 2025

MiRA Live - Microphone Pairing Mode | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA - User Interface | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA - Layout Creation with Customizable Workspaces | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Live - Introduction | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA by FLUX:: - Sample-Push Technology | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Session - Introduction | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Live - Introduction to System Analysis | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Studio - Introduction | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Live - Quick Look | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Family - Quick Look | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Session - Quick Look | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

MiRA Studio - Quick Look | Audio Analysis & Metering Software from FLUX:: Jan 23, 2025

FLUX:: SPAT Revolution 24.08 Update | Integrates w/ JBL Venue Synthesis Acoustic Simulation Software Aug 16, 2024

FLUX:: | Using SPAT Revolution for Dolby Atmos Delivery | Webinar Aug 1, 2024

FLUX:: | Pt. 2 - Pro Tools Integration with SPAT Revolution - Routing Folders and Aux I/O | Webinar Aug 1, 2024

FLUX:: | Pt. 1 - Pro Tools Integration with SPAT Revolution - Introduction | Webinar Aug 1, 2024

FLUX:: | Integrating Spacemap Go with SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Integrating QLab with SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Integrating Ableton Live Tools with SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | ReaVolution Tools for Reaper Integration with SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Understanding the OSC Integration of SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Using Wave Field Synthesis in SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Panning Technologies & Acoustic Simulation Presets in SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Dealing with Speaker Arrangements in SPAT Revolution | Webinar Aug 1, 2024

FLUX:: | Configuring Sessions in SPAT Revolution - Setup Page | Webinar Aug 1, 2024

FLUX:: | Introduction to SPAT Revolution | Webinar Jul 31, 2024

Webinar - SPAT Revolution Mixes to a Dolby Atmos Deliverable Dec 14, 2023

SPAT Revolution 23.11 Update Webinar Nov 23, 2023

SPAT Revolution - 23.08 Update Sep 22, 2023

Using Aux I/O Routing Folders - SPAT Revolution/Protools Webinar Jul 20, 2023

Pro Tools Integration with SPAT Revolution - Webinar Jul 6, 2023

Using Meyer Spacemap Go with SPAT Revolution - Webinar Jun 8, 2023

Ableton Live Tools for SPAT Revolution - Webinar May 25, 2023

EVO Touch - Introduction Mar 22, 2023

EVO Compressor - The Hidden Advanced Parameters Mar 8, 2023

Introduction to EVO:: Series Plugin Pack Feb 17, 2023

Mastering - Loudness Comparison Dec 9, 2022

Elixir - Multichannel & True Peak Limiter Dec 9, 2022

Ableton Live Tools for SPAT Revolution - From Single Machine to Dual Computer Setup Sep 28, 2022

Ableton Live Tools for SPAT Revolution - Rendering Sep 28, 2022

Ableton Live Tools for SPAT Revolution - Workflow Sep 28, 2022

Ableton Live Tools for SPAT Revolution - Installation & Setup Sep 28, 2022

Ableton Live Tools for SPAT Revolution - Introduction Sep 28, 2022

Crafting shows with QLab and SPAT Revolution Mar 30, 2022

Using WFS Wave Field Synthesis (Add-on) option with SPAT Revolution Ultimate Mar 16, 2022

SPAT Revolution 22.02 Release webinar + Q&A Mar 2, 2022

Analyze This! Session Analyzer v22.01 update Jan 21, 2022

About Flux :

Flux:: sound and picture development was founded in the 1990s during the early days of digital audio software workstations, collaborating with Merging Technologies in the creation of Merging’s now renowned products.

In 2007 Flux:: started releasing their own exquisite audio software product line tailored for demanding sound engineers, and has since then been focused on creating intuitive and technically innovative audio software tools, used by sound engineers and producers in the professional audio, broadcast, post production and mastering industry all over the world.

We're distributing Flux in the following 243 countries :

Afghanistan

Aland Islands

Albania

Algeria

American Samoa

Andorra

Angola

Anguilla

Antarctica

Antigua And Barbuda

Argentina

Armenia

Aruba

Australia

Austria

Azerbaijan

Bahamas

Bahrain

Bangladesh

Barbados

Belarus

Belgium

Belize

Benin

Bermuda

Bhutan

Bolivia

Bosnia And Herzegovina

Botswana

Bouvet Island

Brazil

British Indian Ocean Territory

Brunei Darussalam

Bulgaria

Burkina Faso

Burundi

Cambodia

Cameroon

Canada

Cape Verde

Cayman Islands

Central African Republic

Chad

Chile

Christmas Island

Cocos (Keeling) Islands

Colombia

Comoros

Congo

Congo

Cook Islands

Costa Rica

Côte d'Ivoire

Croatia

Cuba

Cyprus

Czech Republic

Denmark

Djibouti

Dominica

Dominican Republic

Ecuador

Egypt

El Salvador

Equatorial Guinea

Eritrea

Estonia

Ethiopia

Falkland Islands (Malvinas)

Faroe Islands

Fiji

Finland

France

French Guiana

French Polynesia

French Southern Territories

Gabon

Gambia

Georgia

Germany

Ghana

Gibraltar

Greece

Greenland

Grenada

Guadeloupe

Guam

Guatemala

Guernsey

Guinea

Guinea-Bissau

Guyana

Haiti

Heard Island & McDonald Islands

Holy See (Vatican City State)

Honduras

Hungary

Iceland

India

Indonesia

Iran

Iraq

Ireland

Isle Of Man

Israel

Italy

Jamaica

Jersey

Jordan

Kazakhstan

Kenya

Kiribati

Korea-north

Korea-south

Kuwait

Kyrgyzstan

Lao

Latvia

Lebanon

Lesotho

Liberia

Libyan Arab Jamahiriya

Liechtenstein

Lithuania

Luxembourg

Macao

Macedonia

Madagascar

Malawi

Malaysia

Maldives

Mali

Malta

Marshall Islands

Martinique

Mauritania

Mauritius

Mayotte

Mexico

Micronesia

Moldova

Monaco

Mongolia

Montenegro

Montserrat

Morocco

Mozambique

Myanmar

Namibia

Nauru

Nepal

Netherlands

Netherlands Antilles

New Caledonia

New Zealand

Nicaragua

Niger

Nigeria

Niue

Norfolk Island

Northern Mariana Islands

Norway

Oman

Pakistan

Palau

Palestinian Territory

Panama

Papua New Guinea

Paraguay

Peru

Philippines

Pitcairn

Poland

Portugal

Puerto Rico

Qatar

Reunion

Romania

Russian Federation

Rwanda

Saint Barthélemy

Saint Helena

Saint Kitts And Nevis

Saint Lucia

Saint Martin

Saint Pierre And Miquelon

Saint Vincent And The Grenadines

Samoa

San Marino

Sao Tome And Principe

Saudi Arabia

Senegal

Serbia

Seychelles

Sierra Leone

Singapore

Slovakia

Slovenia

Solomon Islands

Somalia

South Africa

South Georgia & The South Sandwich Islands

Spain

Sri Lanka

Sudan

Suriname

Svalbard And Jan Mayen

Swaziland

Sweden

Switzerland

Syrian Arab Republic

Taiwan

Tajikistan

Tanzania

Thailand

Timor-Leste

Togo

Tokelau

Tonga

Trinidad And Tobago

Tunisia

Turkey

Turkmenistan

Turks And Caicos Islands

Tuvalu

Uganda

Ukraine

United Arab Emirates

United Kingdom

United States

United States Minor Outlying Islands

Uruguay

Uzbekistan

Vanuatu

Venezuela

Viet Nam

Virgin Islands, British

Virgin Islands, U.S.

Wallis And Futuna

Western Sahara

Yemen

Zambia

Zimbabwe